I recently left my job at the Wikimedia Foundation (WMF) to head up engineering at MTTR. I’m proud of the hard work my team at WMF did, making it easier for developers to get content and data from Wikipedia and its sister projects.

I want to highlight the API Platform that we built, and celebrate its completion in December 2020. If you don’t want to read my analysis, just run over to api.wikimedia.org and check it out yourself. (I did a tech talk about the API work in June 2020, and if you like videos, that might work better for you.)

What’s an API?

Web APIs are the way that we let programs running on different computers share data and tell each other what to do. A good example is the Twitter API, which lets the official Twitter apps, and apps from other developers, access people’s feeds and post on their behalf.

Companies make APIs for a lot of reasons. One of the best is to encourage emergent behaviours in development — letting people outside their organisation try new things with the same data. It’s a technique that has been especially successful at Wikipedia over the years; a lot of the editing and spam-fighting work on wiki projects is done via API.

The Problem

When I started at WMF in 2018, APIs were all over the map in terms of quality and technology choices. They hadn’t been organised with an intentional API strategy, and had largely been built piece by piece to meet the needs of individual client developers at a particular time. Our API endpoints were spread across 600+ per-project API servers, and our API documentation was scattered across many web sites.

My team was tasked with putting our organisation’s best foot forward in terms of API technologies. We wanted to set a new standard for APIs to meet within the organisation, and gradually migrate our internal and external clients to the new system.

Most of all, we wanted new developers to be able to onboard to Wikimedia APIs in much the same way that they onboard to APIs from Twilio, Google, Facebook or Twitter.

The Solution

One thing about establishing a best practice is that it involves making choices. We had most of the technologies we needed, and the effort was mostly subtractive: choosing some tools and discouraging the use of others.

- A unified API server. Instead of having API endpoints scattered around different project domains like fr.wikibooks.org and en.wikipedia.org, we set up a single (virtual) API server at api.wikimedia.org. All our APIs would be organised in a tree under that domain, making it conceptually easier for developers to find and use API endpoints. The API server acts as a gateway to internal servers, so we can have a variety of implementations with a single virtual interface.

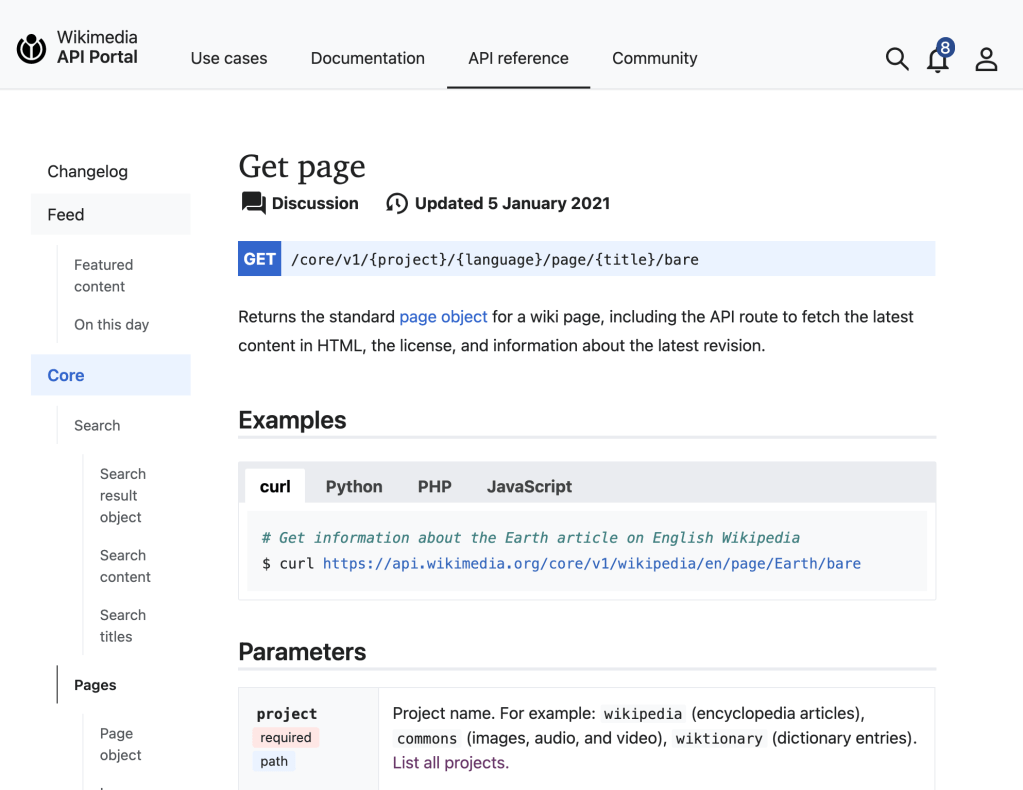
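To make the "single tree" idea concrete, here's a minimal sketch of how a client might build endpoint URLs under the one gateway domain. The `endpoint_url` helper is hypothetical, and the path segments after the API name and version (project, language, resource) are an assumed layout for illustration; check the reference documentation on the portal for the real paths.

```python
from urllib.parse import quote, urlencode

API_ROOT = "https://api.wikimedia.org"  # the single gateway domain named in the post


def endpoint_url(api, version, *segments, **query):
    """Build a URL in the tree under API_ROOT (hypothetical helper).

    `api` and `version` are the first two levels, e.g. "core" and "v1".
    The remaining `segments` are an assumed layout, not a documented one.
    """
    parts = [api, version, *segments]
    # Percent-encode each segment so a title like "AC/DC" stays one segment.
    path = "/".join(quote(str(part), safe="") for part in parts)
    url = f"{API_ROOT}/{path}"
    if query:
        url += "?" + urlencode(query)
    return url


# Two projects that used to live on separate domains now share one root.
print(endpoint_url("core", "v1", "wikipedia", "en", "page", "Earth"))
print(endpoint_url("core", "v1", "wikibooks", "fr", "search", "page", q="cuisine", limit=5))
```

The point of the design is that the client only ever needs one host name; which internal server answers a given path is the gateway's business.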

- REST. Our older, idiosyncratic RPC-style API has been a barrier to entry for a number of developers. For the new API service, we standardised on REST, which has been the dominant paradigm for APIs on the Web for a decade or so. Although there are some tempting new technologies for query-style APIs, we decided that we’d try to get firmly into the 2010s before we started experimenting with the technologies of the 2020s. Because our core API was of an older era, we built a new core API that uses a RESTful paradigm for reading and writing key wiki data types.

- JSON. Again, this is a conservative choice, but we set a standard that only endpoints using JSON would be part of the new API. It’s a single, easy representation for most simple and complex data formats.

- OAuth 2.0. One particular pain point with our existing APIs was authentication and authorisation. We had a mix of cookie-based authentication, which is fraught with security hazards, and a homebrew token-based system, which is probably as dependable as all other homebrew Web security. We implemented OAuth 2.0 as a new authentication mechanism, and then required it for all API endpoints in the new system. We also built API key management features into the new API Portal (see below).

- Explicit rate limiting. This was one of the more controversial choices for the service. We implemented explicit rate limits for using the APIs, so that only a limited number of requests (5000, by default) could be made in a particular time period (1 hour, by default) by a single user with a single app. Previously, our organisational policy had been to discourage overuse of the Wikipedia and sister project servers, without explicit direction as to what “overuse” meant. The way you’d find out that you were making too many API calls was if you started getting error messages or if your IP address was blocked. It’s a lot better to know ahead of time what the acceptable limit is!

- Semantic versioning. Another explicit problem we heard from client developers was that our API endpoints would change their behaviour unexpectedly without much warning. Although our teams had developed an intricate hierarchy of marking different endpoints as “experimental” or “deprecated” at different times, this wasn’t always clear in documentation. So, we clarified this by committing to a semantic versioning policy. That means that if you start working with a particular version of the API (say, 1.1), any later 1.x version will only add new endpoints or new properties to existing endpoints, and your current code will keep working as expected. If there are any incompatible changes, we’ll make a major version revision (to 2.0, say). Most of all, the major version number is embedded in the URLs for API endpoints, so anything at /core/v1/… will always be backwards-compatible.

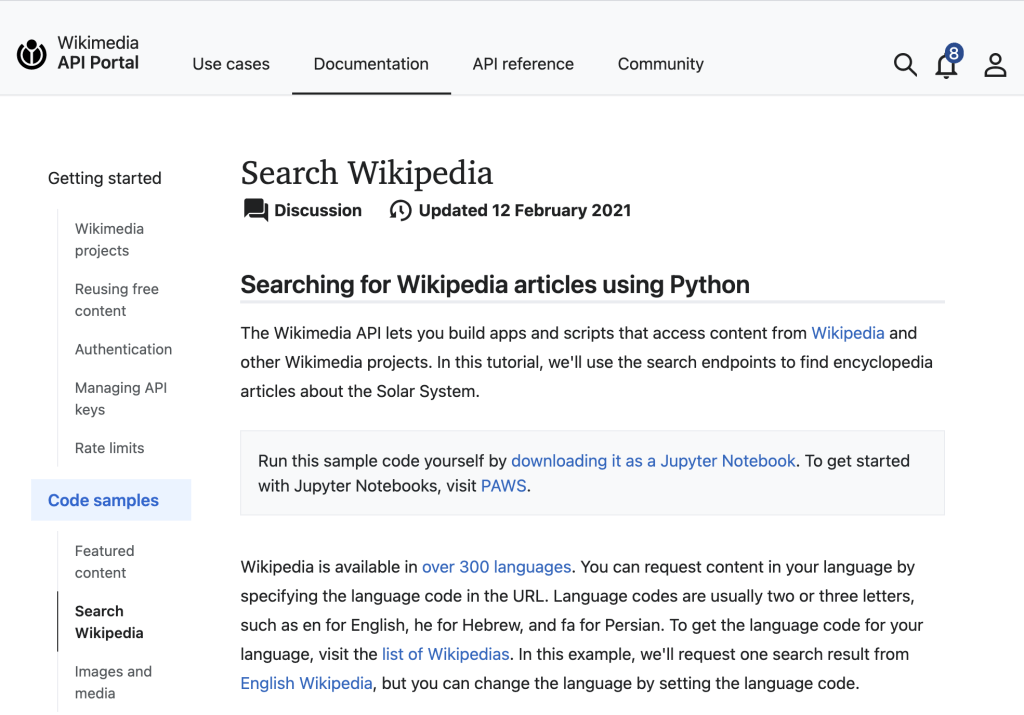
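The versioning promise boils down to a rule a client can check for itself. Here's a minimal sketch of that rule; the function names are made up for illustration and aren't part of any Wikimedia library.

```python
def parse_version(text):
    """Split a version like "1.4" into (major, minor); minor defaults to 0."""
    parts = text.split(".")
    major = int(parts[0])
    minor = int(parts[1]) if len(parts) > 1 else 0
    return major, minor


def is_compatible(built_against, served):
    """True if a client written for `built_against` should keep working on `served`.

    Same major version means no breaking changes; an equal or higher minor
    version means everything the client relies on is still there.
    """
    built_major, built_minor = parse_version(built_against)
    served_major, served_minor = parse_version(served)
    return served_major == built_major and served_minor >= built_minor


def url_prefix(api, version):
    """Only the major version shows up in the path, e.g. "/core/v1/"."""
    major, _ = parse_version(version)
    return f"/{api}/v{major}/"


print(is_compatible("1.1", "1.4"))  # additive changes only
print(is_compatible("1.1", "2.0"))  # a major bump signals breaking changes
print(url_prefix("core", "1.4"))
```

Because the path only carries the major version, a client pinned to a v1 URL picks up minor releases without changing a line of code.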

- Documentation. One of the biggest pain points for Wikimedia developers was API documentation. In this case, we really went all out. Our documentation specialist put together a great, extremely organised API portal that covers high-level topics, specific code examples, API references, and API use cases. And the API key sign-up and management is all integrated into the same portal, so it’s really a one-stop shop for developers working with Wikipedia data and content.
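The OAuth 2.0 and rate-limiting items in the list above lend themselves to a small client-side sketch. This assumes the default quota described above (5000 requests per hour) and the standard OAuth 2.0 bearer-token header. The `RequestBudget` class is hypothetical, and the sliding window it uses is my own choice for the client side; it isn't a description of how the server counts requests.

```python
import time
from collections import deque


class RequestBudget:
    """Client-side tracker for a quota of `limit` requests per `window` seconds."""

    def __init__(self, limit=5000, window=3600.0, clock=time.monotonic):
        self.limit = limit
        self.window = window
        self.clock = clock  # injectable so the class can be tested without waiting
        self._sent = deque()  # timestamps of requests still inside the window

    def _expire(self, now):
        # Forget requests that have aged out of the window.
        while self._sent and now - self._sent[0] >= self.window:
            self._sent.popleft()

    def remaining(self):
        """How many more requests fit in the current window."""
        self._expire(self.clock())
        return self.limit - len(self._sent)

    def try_acquire(self):
        """Record one request if the budget allows it; return whether it did."""
        now = self.clock()
        self._expire(now)
        if len(self._sent) >= self.limit:
            return False
        self._sent.append(now)
        return True


def auth_headers(access_token):
    """Headers for a request authorised with an OAuth 2.0 bearer token."""
    return {
        "Authorization": f"Bearer {access_token}",
        "Accept": "application/json",  # the new API is JSON-only
    }


budget = RequestBudget()
if budget.try_acquire():
    headers = auth_headers("YOUR_ACCESS_TOKEN")  # placeholder, not a real token
    print(sorted(headers))
print(budget.remaining())
```

A client that checks its own budget before each call can back off gracefully instead of discovering the limit through error responses.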

The Results

I love the way the API Portal site turned out. It’s got a clean, simple interface that is really inviting and reminds me of other developer portals from leading Internet platforms.

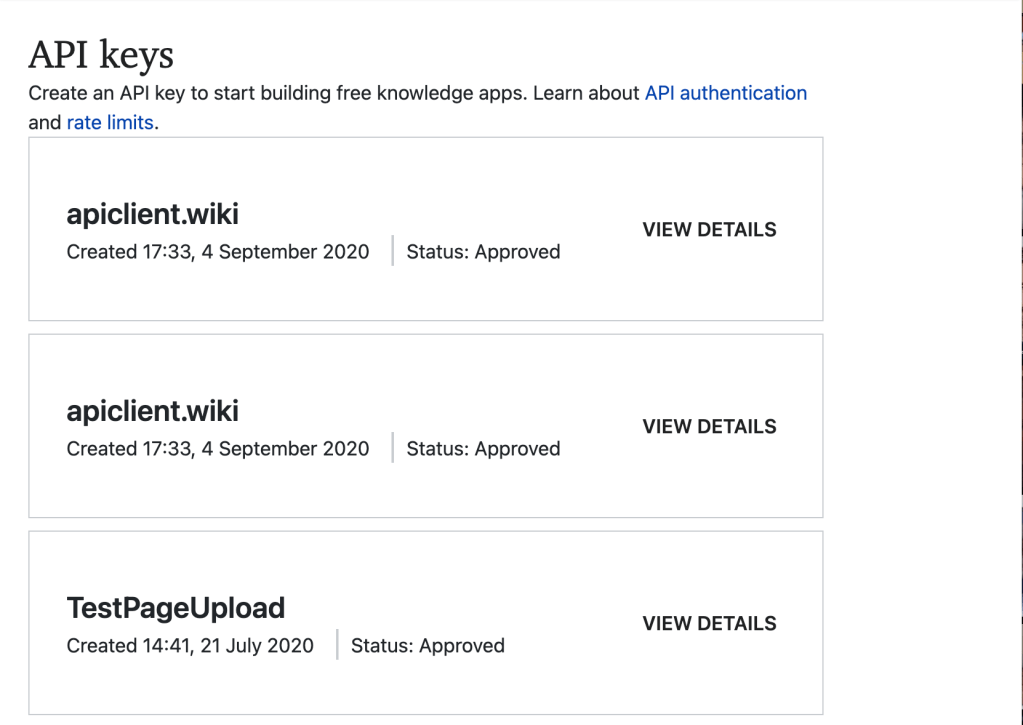

You can log in with your Wikipedia user name and password, or register for a new one. And then it’s really easy to configure your Wikimedia API keys.

The reference documentation is nicely formatted and easy to use.

And there are some great case studies showing how to do simple tasks in different programming languages.

Trying it out

One of the fun parts of my job as a product manager is getting to test out the technologies we build. For the new API platform, I built a Web-based single-page API client that runs on the GitHub Pages platform. apiclient.wiki lets you browse and search English Wikipedia with a dynamic Web interface.

You can see the code at github.com/wikimedia/apiclient-wiki.

What happens next

First, I hope that people on the Internet give the folks at the Wikimedia Foundation the kudos they deserve for making and releasing this great platform. It’s hard to look forward to the future and make the API system that’s needed for Wikipedia’s next decade, and I’m proud of all the blood, sweat and tears that the Platform team and my other colleagues put into making this platform happen.

Second, I hope to see interesting and complex software clients built to use this new system. If you’re curious, I suggest signing up on the Portal, setting up your first API keys, and trying to build a sample app. Our guess was that many, many types of apps would benefit from integrating Wikipedia data and content into the experience; once you try the API, you may come up with new ideas we never thought of.

It’s also worthwhile to keep an eye on the API portal to see what’s coming next. When I left, we were expecting new APIs to be coming from Wikidata and other parts of the organisation. I hope to see other, more vertical endpoints coming out in the future, directed at particular use cases in the Wikimedia community.

If you have questions, comments or suggestions, please check on the API Portal community pages to see how to get involved. (I can’t really answer questions about Wikimedia any more, but if you’d like to know about MTTR, drop me a line…).

It was nice to have you back at Wikimedia for as long as it lasted, but congratulations on MTTR! That seems very exciting.